If you travel a lot, you’ve probably experienced this: you’re hurrying to the airport to catch a flight, only to find out that it is delayed. Wouldn’t it be great to know in advance when a flight will be delayed? We can use past flight delay data to develop a classification model that predicts whether a future flight will be on time or delayed. This tutorial shows how this works by creating a flight-delay prediction model in the Azure Machine Learning Studio workbench.

The model uses a boosted decision tree algorithm to predict whether a flight will be more or less than 15 minutes late. In this approach, the algorithm searches for patterns in the data of past flight connections and applies these patterns to classify flights into two classes: delayed and not delayed. Travel service providers use similar models to warn customers when a flight is likely to be delayed.

What is Flight Delay Prediction?

Flight delay prediction is the use of data and analytics to forecast whether a flight is likely to be delayed. This information can be used by airlines, airports, and passengers to plan and prepare for potential delays and to make informed decisions about travel plans. Models for predicting flight punctuality typically consider a range of factors, including historical data on past flight delays, weather conditions, the performance of the airline and the specific aircraft involved, and other relevant information. Machine learning algorithms are often used to analyze and process this data, and to generate predictions about the likelihood and duration of flight delays. These predictions can be made in real-time, as the flight is in progress, or in advance, based on the scheduled departure time and other factors.

Developing a Flight Prediction Model in Azure Machine Learning

In the following hands-on tutorial, we will develop a flight prediction model in Azure Machine Learning. To develop the model, we will carry out the following steps:

- Gather and prepare the data: We will load data on past flights, including information on their departure and arrival times, the airlines and aircraft involved, the airports and routes, and other relevant factors. This data needs to be cleaned and preprocessed to remove any errors or inconsistencies and to format it in a way that can be used by the machine learning model.

- Explore and analyze the data: This involves using tools and techniques, such as data visualization and statistical analysis, to understand the patterns and trends in the data and to identify any factors that may be associated with flight delays.

- Train a machine learning algorithm: We train a boosted decision tree algorithm on the data to learn the patterns and relationships that exist in it.

- Evaluate the model: We test our trained model on a separate set of data to see how accurately it can predict flight delays. This can be done using a range of metrics, such as accuracy, precision, and recall, to measure the performance of the model and to identify any areas for improvement.

- Deploy and use the model: We don’t cover this step explicitly. However, we broadly discuss how we could deploy the model into production.

Prerequisites: Signing Up for a Free Azure Account

To use Azure Machine Learning Studio, you need access to a valid Azure subscription. Don’t worry, you can sign up for a free Azure account that offers beginners a sufficient number of credits. Simply follow these steps:

- Go to the Azure website (https://azure.microsoft.com/).

- Click on the “Try Azure for free” button in the middle of the page. Then select “Start free” on the next page.

- Enter your email address and password, and create a new Microsoft account, if you don’t already have one.

- Follow the on-screen instructions to complete the sign-up process, including verifying your email address and phone number.

- Once your account has been created, you can log in to the Azure portal (https://portal.azure.com/) using your Microsoft account credentials.

- From the Azure portal, you can access a range of services and resources, including machine learning, data analytics, and other cloud-based tools and services.

Note that the free Azure account includes a limited amount of free credit and services, which you can use to try out Azure and learn more about its capabilities. Once your free credit has been used up, you can choose to upgrade to a paid subscription to continue using Azure.

Step #1 Access Microsoft Azure Machine Learning Studio

This article uses Microsoft’s data science workbench Azure Machine Learning Studio (classic). There is also a new version of the Machine Learning Studio available, which provides more functionality but works in a similar way. The classic workbench provides comprehensive functions such as creating data pipelines, training and testing machine learning models, and publishing trained models as a web service via an API.

The studio is available via free trial access. You can create a free test account (valid for eight hours) via “Sign up here” on Azure Machine Learning Studio or log in with an existing Microsoft Live account. After a successful login, you will see the experiments section:

Step #2 Importing Training Data into Azure ML

This tutorial works with the CSV dataset FlightDelayData.csv.

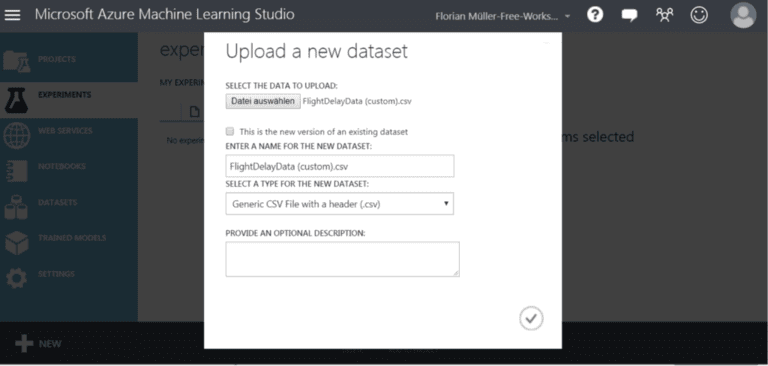

After downloading the dataset, you can import it into Azure Machine Learning Studio. Navigate to “Experiments” and click on “+ New” at the bottom left. On the following page, select “Dataset” on the left and then “Upload Local File.” Select the file FlightDelayData and confirm the upload.

Confirm the dialog to access the experiment workspace. Here, you will find a list of different modules (highlighted in light blue) to the left of the workspace. The modules provide all central functions in Azure Machine Learning Studio, such as transforming and exploring the data and using them in machine learning.

Step #3 Exploring the Data

Now that the dataset is available in Azure Machine Learning, we will prepare it for use in our flight delay prediction model. First, we will drag and drop the FlightDelayData dataset from “Saved Datasets” into the grey workspace of the experiment. Next, we will visualize the data by right-clicking on FlightDelayData –> “dataset” –> “Visualize” in the grey work area.

Clicking on the individual columns will give you an overview of the dataset’s characteristics and distribution. In the upper left corner, you can see that the dataset contains 135970 entries for flight connections. Each entry or line represents one flight. All flights took place in 2013. Furthermore, the data includes the departure and arrival locations of flights, the time and day of departure and arrival, the airline, and the deviation from the planned take-off and landing time.
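
The same kind of overview can also be sketched outside the studio. The following is a minimal Python sketch, not part of the Azure workflow: the sample rows are made up for illustration, and every column name except ArrDel15 is a hypothetical stand-in for the columns in the real file.

```python
import csv
import io
from collections import Counter

# Made-up sample rows for illustration only. Every column name except
# ArrDel15 is a hypothetical stand-in for the real file's columns.
SAMPLE_CSV = """Carrier,OriginAirport,DestAirport,DepDelay,ArrDel15
AA,JFK,LAX,5,0
DL,ATL,ORD,42,1
UA,SFO,SEA,-3,0
AA,ORD,JFK,18,1
DL,LAX,ATL,0,0
"""


def summarize(csv_text):
    """Return the row count and the distribution of the ArrDel15 label."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    label_counts = Counter(row["ArrDel15"] for row in rows)
    return len(rows), dict(label_counts)


row_count, label_counts = summarize(SAMPLE_CSV)
print(f"rows: {row_count}")
print(f"label distribution: {label_counts}")
```

Checking the label distribution early is useful because it shows how unevenly the two classes, delayed and not delayed, are represented.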

Step #4 Creating a Data Pipeline

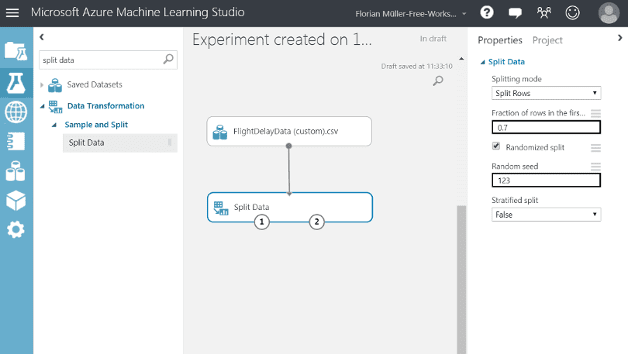

Before we can train the model, we need to split the data into two parts: train and test. We will use the first part of the data to train the Machine Learning model and the second to evaluate its predictions. Holding back test data in this way is standard practice in supervised learning. Search for the “Split Data” module in the search list on the left and drag and drop it into the grey workspace to split the data. After this, you can connect the two modules by clicking on the output of the dataset (FlightDelayData) and dragging it to the input of the “Split Data” module (see screenshot).

Next, we configure the Split Data module. Click on the module and make the following settings on the right side under “Properties”: Fraction of rows in the first output dataset: 0.7 and Random seed: 123.

In this way, we divide the data randomly in a 70/30 ratio. You can leave the other settings as they are.
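
To make the effect of these two settings concrete, here is a minimal Python sketch of a seeded random 70/30 split. It only illustrates the idea: the function name split_rows is hypothetical, and the sketch will not reproduce the exact rows that the Split Data module selects.

```python
import random


def split_rows(rows, fraction=0.7, seed=123):
    """Shuffle the rows with a fixed seed and cut them into two parts."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * fraction)
    return shuffled[:cut], shuffled[cut:]


# Stand-in row ids; the count matches the 135970 flights in the dataset.
train, test = split_rows(range(135970))
print(len(train), len(test))
```

The fixed seed makes the split repeatable, so that a later run of the experiment evaluates the model on the same test rows.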

(In practice, the compilation and preparation of the data are much more complex. I have already carried out some steps in advance.)

Step #5 Creating a Classification Model

Now we will create a classification model. To do so, we will drag further modules into the grey area of the workbench. Our model will use a boosted decision tree classifier. We can use this algorithm by dragging the module “Two-Class Boosted Decision Tree” into the grey workspace below the other modules. You can leave the settings of the module unchanged.

Next, we select the module “Train Model” and drag it into the grey workspace under the other modules. In the workspace, connect the output of the “Two-Class Boosted Decision Tree” module to the left input of the “Train Model” module.

Remember, we want to predict whether flights will be more or less than 15 minutes late. Select “Train Model” in the grey workspace and click on “Launch Column Selector” under Properties on the right. In the Column Selector, enter “ArrDel15” under “Column Name”. This column contains the so-called “prediction label,” which is the information on whether flights were more or less than 15 minutes late. Don’t forget to connect the left output of the Split Data module to the right input of the Train Model.
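
The idea behind such a label column can be sketched in a few lines of Python. This is an illustration under assumptions, not the way the dataset was actually built: the ArrDelay column, the sample rows, and the helper to_label are hypothetical, and counting a delay of exactly 15 minutes as delayed is an assumption as well.

```python
def to_label(arrival_delay_minutes, threshold=15):
    """Return 1 for a delayed flight, 0 otherwise.

    Assumption: a delay of exactly `threshold` minutes counts as delayed.
    """
    return 1 if arrival_delay_minutes >= threshold else 0


# Hypothetical rows; "ArrDelay" (arrival delay in minutes) is an assumed column.
flights = [
    {"Carrier": "AA", "ArrDelay": 4},
    {"Carrier": "DL", "ArrDelay": 37},
    {"Carrier": "UA", "ArrDelay": -6},
]

# The label is the column the model learns to predict.
labels = [to_label(flight["ArrDelay"]) for flight in flights]

# The features are the remaining columns. The raw delay is dropped here
# because it would reveal the label directly.
features = [
    {key: value for key, value in flight.items() if key != "ArrDelay"}
    for flight in flights
]

print(labels)
print(features)
```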

To evaluate the model’s predictions later, we will add a “Score Model.” We do this by selecting the module “Score Model” and dragging it into the workspace below the other modules. Finally, we need to create two connections. First, connect the left input of the “Score Model” with the left output of the “Train Model.” Second, connect the input node on the right of the “Score Model” to the output on the right of “Split Data,” which is the 30% of the original data set we use to test the model.

Step #6 Training the Model

Before we can train the model, we add the module “Evaluate Model” by searching for it in the module list and dragging it into the workspace. Finally, we connect the (left) input of the “Evaluate Model” with the output of the “Score Model.”

Once we have created the data transformation pipeline, we are ready to train the model. Start the training process by clicking the “Run” button in the dark bar at the bottom. It may take a few minutes until the process has finished. Meanwhile, you can monitor the progress via the green checkmarks shown on the modules.

Step #7 Evaluating Model Performance

So far, we have built a statistical model for flight delay prediction. Of course, we want to know how often our model is right or wrong with the predictions. Evaluating the performance of prediction models is thus an essential step in their development. To evaluate the model performance, right-click on “Evaluate Model” -> “Evaluation results” -> “Visualize.” Below you will find the receiver operating characteristic (ROC) of the trained model:

Let’s look at the different metrics at the bottom.

- The test data set contains 40791 flights, 30% of the original data.

- The model correctly predicted for 2098 flights that they would be delayed by more than 15 minutes (true positives).

- In 1310 cases, the model predicted a delay of more than 15 minutes, but the flight was not delayed (false positives).

- In 6825 cases, the model predicted no delay, but the flight was actually more than 15 minutes late (false negatives).

- The model was correct in 30558 cases, predicting that these flights would be less than 15 minutes late (true negatives).

- Overall, the model is correct in about 80% of the cases (Accuracy = 0.801).
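
The reported accuracy can be recomputed from the four counts above, along with precision and recall. The following short Python sketch uses only the numbers from the list; the variable names are our own.

```python
# Counts taken from the evaluation results listed above.
tp, fp, fn, tn = 2098, 1310, 6825, 30558

total = tp + fp + fn + tn
accuracy = (tp + tn) / total   # share of all flights classified correctly
precision = tp / (tp + fp)     # share of predicted delays that were real delays
recall = tp / (tp + fn)        # share of real delays that the model caught
f1 = 2 * precision * recall / (precision + recall)

# Accuracy of always predicting "not delayed", for comparison.
baseline = (tn + fp) / total

print(f"flights:   {total}")
print(f"accuracy:  {accuracy:.3f}")
print(f"precision: {precision:.3f}")
print(f"recall:    {recall:.3f}")
print(f"f1:        {f1:.3f}")
print(f"baseline:  {baseline:.3f}")
```

The recall of roughly 0.24 shows that the model still misses most of the delayed flights, which is worth keeping in mind when reading the accuracy figure.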

Finally, we look at the ROC curve. The curve shows how the true positive rate and the false positive rate change as the prediction threshold varies. The larger the area under the curve, the better the prediction model. The curve slopes upwards and lies above the grey diagonal line, which means that the model performs better than random guessing. The grey diagonal corresponds to a model that guesses at random, with an area under the curve of 0.5. For a perfect model that classifies every flight correctly, the area would be 1.0.
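
To illustrate what the area under the curve measures, here is a minimal Python sketch on made-up toy scores. It computes the area as the share of (delayed, not delayed) pairs in which the delayed flight receives the higher score, which is equivalent to the area under the ROC curve. The function names and the data are hypothetical and not taken from the experiment.

```python
def roc_point(labels, scores, threshold):
    """Return (false positive rate, true positive rate) at one threshold."""
    tp = sum(1 for y, s in zip(labels, scores) if y == 1 and s >= threshold)
    fp = sum(1 for y, s in zip(labels, scores) if y == 0 and s >= threshold)
    positives = sum(1 for y in labels if y == 1)
    negatives = len(labels) - positives
    return fp / negatives, tp / positives


def roc_auc(labels, scores):
    """Area under the ROC curve via pairwise comparison; ties count half."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy data: 1 = delayed, 0 = not delayed; scores are made-up probabilities.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]

print(roc_point(labels, scores, threshold=0.5))
print(roc_auc(labels, scores))
```

Each threshold yields one point on the curve, so lowering the threshold catches more delayed flights at the cost of more false alarms.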

Step #8 Deploying the Model as a Web Service

Now that we have our model available, of course, we would like to make it accessible to others. Let’s quickly discuss how we could deploy our model as a web service from the designer.

We won’t go into too much detail here and will only cover the basic steps for deploying a model. To deploy a machine learning pipeline in Azure Machine Learning, you need to convert the training pipeline into an inference pipeline. The inference pipeline can process prediction requests in real time or in batch mode. Deploying the model from the designer involves removing the training components, such as the Train Model and Split Data modules, and adding web service inputs and outputs to handle user requests. When you create an inference pipeline, Azure ML stores the trained model as a Dataset component in the component palette. From there, you can access it under “My Datasets.” The saved trained model is then added to the pipeline, along with the web service input and output components. These components define the points where user data enters the pipeline and where the pipeline returns the results. Once you have deployed the model, other applications and systems can access it via a REST API.
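
As a rough idea of what a client call could look like, the following Python sketch builds a scoring request without sending it. Everything specific in it is an assumption: the URL and API key are placeholders, the input name "input1" and the feature columns are hypothetical, and the real payload schema depends on how the endpoint is defined, so check the sample code that Azure shows for your deployed service.

```python
import json
import urllib.request


def build_scoring_request(url, api_key, rows):
    """Build (but do not send) a POST request for a scoring endpoint.

    Assumption: the endpoint accepts a JSON body with an "Inputs" object
    and a bearer token. The actual schema depends on the deployment.
    """
    body = json.dumps({"Inputs": {"input1": rows}, "GlobalParameters": {}})
    return urllib.request.Request(
        url,
        data=body.encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Placeholder endpoint, key, and feature columns for illustration only.
request = build_scoring_request(
    "https://example.invalid/score",
    "YOUR_API_KEY",
    [{"Carrier": "AA", "OriginAirport": "JFK", "DestAirport": "LAX"}],
)
print(request.get_method(), request.full_url)

# Sending the request would look like this once a real endpoint exists:
# with urllib.request.urlopen(request) as response:
#     result = json.load(response)
```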

Summary

In this article, we have created a flight delay prediction model in Azure Machine Learning Studio. The model predicts with about 80% accuracy whether flights will be more or less than 15 minutes late. This can help airlines, airports, and passengers to plan and prepare for potential delays and to make informed decisions about travel plans. We have also discussed how we can use the studio designer to deploy our model as a web service that provides predictions in real time or in batch mode.

The prediction model is only the first version and still offers room for optimization. One option to further improve the model would be to add features such as the weather, the aircraft type, etc. Another option would be to test different algorithms and hyperparameters.

I hope this article was helpful. If you have remarks or questions, please write them in the comments.
