ChatGPT, developed by San Francisco-based OpenAI, is an AI chatbot that generates coherent responses to user inquiries. Unlike a traditional search engine, it composes its answers rather than returning standard ones. The results are surprisingly good and have created a massive worldwide sensation. Many think ChatGPT will drastically increase work productivity and revolutionize various industries in the coming years. While this may be true, most applications and integrations for large language models such as ChatGPT are yet to be built. By using the OpenAI API, you can integrate language models into your automated workflows and applications and potentially gain an edge over your competition. This article shows how this works with Python: we send requests to the OpenAI API and process the response.

If you want to know how ChatGPT was trained, this article on “few-shot learning” by OpenAI researchers provides a good overview.


What can you do with OpenAI Language Models such as ChatGPT?

Enough with the hype around ChatGPT; what can these models actually do for us? Well, it turns out OpenAI’s language models can be used for a wide range of helpful stuff. And with “stuff,” I mean natural language processing (NLP) tasks, such as:

- Text generation: The models can generate new text that is coherent, human-like, and creative, depending on the model.

- Language Translation: The models can accurately translate text from one language to another.

- Question answering: The models can answer questions based on a given context.

- Text summarization: The models can generate a summary of a given text.

- Text completion: The models can complete sentences and paragraphs in a given context.

- Language Modeling: The models can predict the next word in a sentence or generate a full text given a prompt.

- Sentiment Analysis: The models can analyze the sentiment of a given text, whether it is positive, negative, or neutral.

- Text classification: The models can classify text into different categories.

- Creative writing: The models can generate creative content such as poetry, music, stories, and more.

- Code generation: The models can generate code snippets.

These are just a few examples of the capabilities of OpenAI’s language models. It’s essential to keep in mind that the specific capabilities of each model will depend on the model you are using and the fine-tuning. In addition, the models can be fine-tuned for specific tasks, which is beyond the scope of this article.
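Most of the tasks listed above come down to how the prompt is phrased. The following is a minimal sketch of reusable prompt templates; the template wording and the names `PROMPT_TEMPLATES` and `build_prompt` are illustrative assumptions, not something prescribed by OpenAI.

```python
# Hypothetical prompt templates; the wording is illustrative only.
PROMPT_TEMPLATES = {
    "summarize": "Summarize the following text in one sentence:\n\n{text}",
    "translate": "Translate the following text into {language}:\n\n{text}",
    "sentiment": (
        "Classify the sentiment of the following text as "
        "positive, negative, or neutral:\n\n{text}"
    ),
}


def build_prompt(task, **fields):
    """Fill the template for `task` with the given fields."""
    if task not in PROMPT_TEMPLATES:
        raise ValueError(f"Unknown task: {task!r}")
    return PROMPT_TEMPLATES[task].format(**fields)


print(build_prompt("translate", language="German", text="Good morning!"))
```

The resulting string can be used as the prompt in any of the requests shown later in this article.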

This article provides more guidance and inspiration on industry use cases of OpenAI GPT-3.

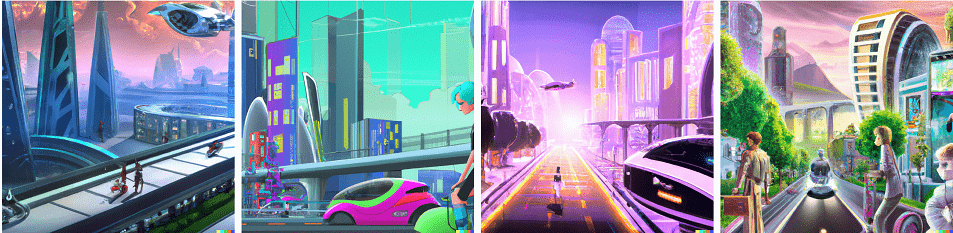

Example of How to Generate Dall-e Prompts Using ChatGPT

The following example shows that ChatGPT can even generate helpful prompts for image creation services such as Dall-e.

Request to ChatGPT: "generate a prompt for dall-e that represents the capabilities of large language models such as chatgpt"

Response from ChatGPT: "Generate an image of a futuristic city with advanced technology, where people are seen interacting with AI-powered devices and transportation, while also showcasing the coexistence of nature and technology. The image should have a caption describing the scene in a coherent and creative manner."

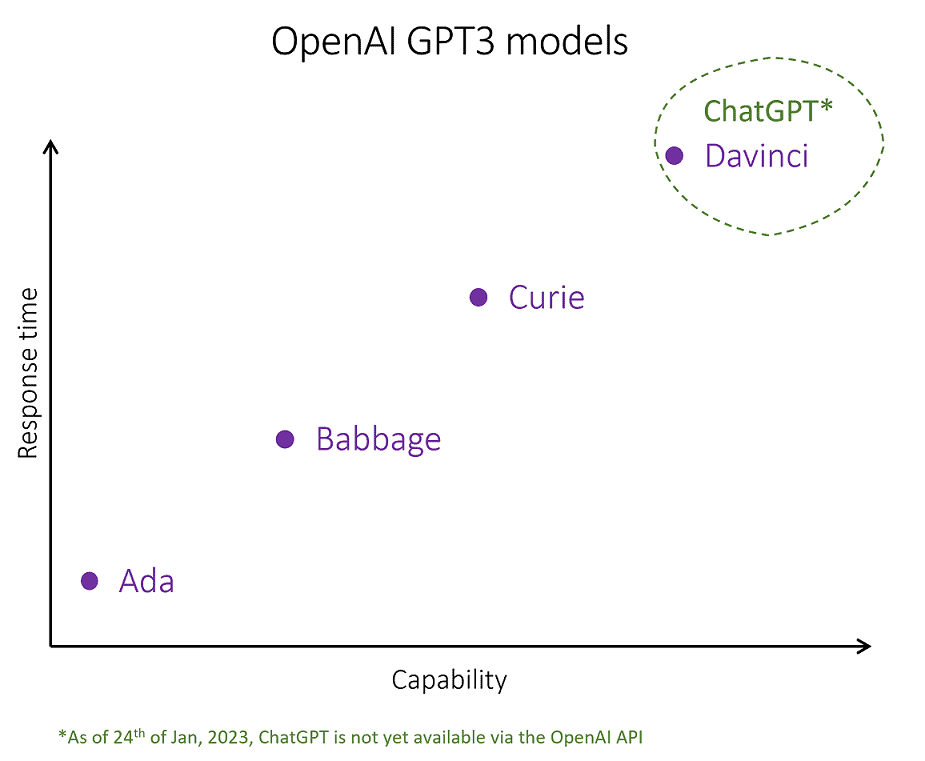

Overview of the OpenAI Language Model Family: Ada, Babbage, Curie, Davinci

OpenAI offers four language models based on GPT-3: Ada, Babbage, Curie, and Davinci. These models are intended for text generation and completion. While they can also generate code, there are separate Codex models that have been fine-tuned for code completion.

It is worth mentioning that ChatGPT and Davinci are not identical. However, both are based on the same GPT version, 3.5. As a result, the two models tend to produce comparable results.

The pros and cons of these models depend on the specific use case. However, here are some general advantages and disadvantages of OpenAI’s models:

| Model | Technical Name | Description |
|---|---|---|
| Ada | text-ada-001 | Ada is the fastest model. It performs well at tasks where creativity is more important than precision. It is suitable for applications such as chatbots, parsing text, simple classification, keywords, and address correction. Ada has the lowest costs. |
| Babbage | text-babbage-001 | Babbage excels at identifying salient patterns in text and utilizing them as a reference to generate new text. Additionally, it can effectively perform general classification tasks, such as categorizing industries, genres, and media content. However, Babbage is not as adept at creative tasks as other models. It can understand sufficient structure to generate simple plots and titles, but it may not be the best choice for more complex creative applications. |
| Curie | text-curie-001 | Curie is capable of many nuanced tasks like sentiment classification and summarization. It reaches almost the level of Davinci but has lower costs. It is also good at answering questions, performing Q&A, and as a general service chatbot. |
| Davinci | text-davinci-003 | Davinci, in its current version 003, corresponds to ChatGPT (GPT3.5). It is a versatile model that can perform a wide range of tasks, often with fewer specific instructions. It excels in tasks that require a deep understanding of content, such as summary generation and creative writing. However, it requires more computational resources and may not be as fast or cost-effective as other models. |

Using GPT-3 Language Models via the OpenAI API in Python

The following Python tutorial demonstrates how you can leverage the OpenAI language models via an API. We will first select and configure our OpenAI language model. Then we will send a request to the model and handle the response. Using the API, you can easily integrate models into your application or workflow, for example, to generate content, handle user requests in natural language, or answer general questions.

The code is available on the GitHub repository.

Register for an OpenAI API Key

To use the OpenAI API, you will first need to register for an API key by visiting the OpenAI website and creating an account. During the registration process, you will be required to provide some basic information about yourself and the project you are working on.

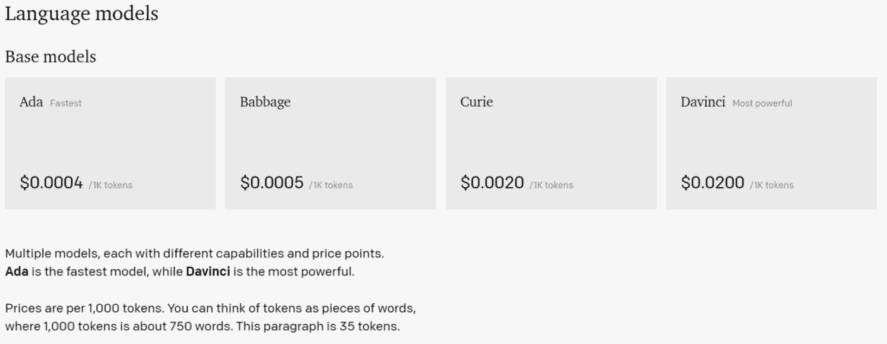

It’s important to note that while GPT-3 is currently available in a free test version, the OpenAI API itself is not free. If you only plan to send a few test requests, the costs will be minimal, but if you integrate the API with a successful application that runs in production, the costs can quickly accumulate.

It is therefore crucial to monitor usage and keep track of costs to avoid unexpected charges. Each language model offered by OpenAI has a different price tag, and charges are based on the number of tokens processed, which covers both the prompt you send and the text the model generates. To manage costs, you can set up a quota on the costs in the OpenAI portal under your profile. This will help you keep spending within your budget.
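To get a feeling for how token-based billing adds up, here is a rough back-of-the-envelope sketch. The function name `estimate_cost` and the example price are assumptions made for illustration; check the OpenAI pricing page for the actual rate of the model you use.

```python
def estimate_cost(prompt_tokens, completion_tokens, price_per_1k_tokens):
    """Estimate the cost of one request, in the currency of the given price.

    Both the tokens sent (prompt) and the tokens generated (completion) count.
    """
    if min(prompt_tokens, completion_tokens) < 0 or price_per_1k_tokens < 0:
        raise ValueError("token counts and price must be non-negative")
    total_tokens = prompt_tokens + completion_tokens
    return total_tokens / 1000 * price_per_1k_tokens


# Assumed example price, NOT an official quote: 0.02 per 1,000 tokens.
EXAMPLE_PRICE_PER_1K = 0.02

cost = estimate_cost(
    prompt_tokens=50,
    completion_tokens=450,
    price_per_1k_tokens=EXAMPLE_PRICE_PER_1K,
)
print(f"Estimated cost per request: {cost:.4f}")
print(f"Estimated cost for 10,000 requests: {cost * 10_000:.2f}")
```

Multiplying the per-request estimate by the expected request volume gives a first idea of what a production workload might cost.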

Technical Setup

Before diving into the code, it’s essential to ensure that you have the proper setup for your Python 3 environment and have installed all the necessary packages. If you do not have a Python environment, you can follow the instructions in this tutorial to set up the Anaconda Python environment. This will provide you with a robust and versatile environment that is well-suited for machine learning and data science tasks.

In this tutorial, we will be working with the OpenAI library. You can install the OpenAI Python library using console commands:

- pip install openai

- conda install openai (if you are using the Anaconda package manager)

Step #1 Imports and Model Configuration

We start by importing the necessary libraries and providing our API key for authorization. The API key can be stored in a separate file, such as api_config_openai.yml, and read into the code. Alternatively, you can also put the key directly in the code. However, be careful not to expose your key to the public.
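As another way to keep the key out of the source code, you could read it from an environment variable. The following is a minimal sketch; the helper name `load_api_key` is hypothetical, and the variable name `OPENAI_API_KEY` is an assumption you can change to match your setup.

```python
import os


def load_api_key(env_var="OPENAI_API_KEY"):
    """Return the API key stored in the given environment variable."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Environment variable {env_var} is not set")
    return key


# Usage (assumes the variable was set in your shell beforehand):
# openai.api_key = load_api_key()
```

Failing early with a clear error message makes a missing key easier to spot than an authorization error returned by the API later on.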

With the API key set up, we use the OpenAI API function “Model.list()” to retrieve a list of models available. Once you receive the response from the API, you should be able to loop through the list of available models and print the model IDs.

    import openai
    import yaml

    # Read the API key from a separate config file
    with open('API Keys/api_config_openai.yml', 'r') as yaml_file:
        config = yaml.safe_load(yaml_file)
    openai.api_key = config['api_key']

    # Show the available models
    model_list = openai.Model.list()
    for model in model_list.data:
        print(model.id)

babbage ada davinci text-embedding-ada-002 babbage-code-search-code text-similarity-babbage-001 text-davinci-001 curie-instruct-beta babbage-code-search-text babbage-similarity curie-search-query code-search-babbage-text-001 text-davinci-002 code-cushman-001 code-search-babbage-code-001 text-ada-001 code-davinci-002 text-similarity-ada-001 text-davinci-insert-002 ada-code-search-code ada-similarity code-search-ada-text-001 text-search-ada-query-001 text-curie-001 text-davinci-edit-001 ... text-ada:001 text-davinci:001 text-curie:001 text-babbage:001

We can see that OpenAI offers a variety of models, including older versions of the Davinci model (for example, text-davinci-001). As a rule of thumb, prefer the highest version available for the model family you want to use.
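Picking the highest version from such a list can be automated. The sketch below works on a plain list of model IDs and assumes the naming scheme `<family>-<number>` seen in the output above; the helper `latest_version` is hypothetical and not part of the OpenAI library.

```python
import re


def latest_version(model_ids, family):
    """Return the ID with the highest numeric suffix for a family such as 'text-davinci'."""
    pattern = re.compile(rf"^{re.escape(family)}-(\d+)$")
    candidates = []
    for model_id in model_ids:
        match = pattern.match(model_id)
        if match:
            candidates.append((int(match.group(1)), model_id))
    if not candidates:
        return None
    return max(candidates)[1]


# A few IDs copied from the model list above
model_ids = [
    "davinci",
    "text-davinci-001",
    "text-davinci-002",
    "text-davinci-edit-001",
    "text-ada-001",
]
print(latest_version(model_ids, "text-davinci"))
```

With the real API response, you could build the input list with `[m.id for m in model_list.data]`.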

Step #2 Sending a Request to the OpenAI API

Once you have your API key and have chosen a model, you can send a request to the API to access the model. We use the OpenAI library’s Completion.create() method to send a request to the OpenAI API. This function allows us to provide several parameters.

- engine: The model type, e.g., “text-davinci-003”

- prompt: The message sent to the model, e.g., “create a poem about blockchain.”

- max_tokens: This parameter limits the number of tokens the model will generate.

- n: Defines how many completions to generate for each prompt.

- stop: This parameter allows us to define up to four sequences where the model will stop generating further tokens. The default is “None.”

- temperature: This parameter allows us to adjust the creativity of the response. The API defaults to 1; in this tutorial, we use 0.7. Higher values can lead to more creative answers but also increase the risk that the answers are not correct.
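The parameters above can be collected in a plain dictionary and sanity-checked before anything is sent to the API. This is only a sketch: the helper `build_completion_params` is hypothetical, and the checks reflect the limits described in the list (up to four stop sequences) plus an assumed temperature range of 0 to 2.

```python
def build_completion_params(engine, prompt, max_tokens=1024, n=1, stop=None, temperature=0.7):
    """Collect and sanity-check the request parameters described above."""
    if not prompt:
        raise ValueError("prompt must not be empty")
    if max_tokens < 1 or n < 1:
        raise ValueError("max_tokens and n must be at least 1")
    if not 0 <= temperature <= 2:
        raise ValueError("temperature must be between 0 and 2")
    if isinstance(stop, str):
        stop = [stop]  # allow a single stop sequence as a plain string
    if stop is not None and len(stop) > 4:
        raise ValueError("at most four stop sequences are allowed")
    return {
        "engine": engine,
        "prompt": prompt,
        "max_tokens": max_tokens,
        "n": n,
        "stop": stop,
        "temperature": temperature,
    }


params = build_completion_params(
    "text-davinci-003",
    "create a poem about blockchain",
    max_tokens=256,
    stop="\n\n",
)
print(params)
```

The resulting dictionary could then be unpacked into the request, for example `openai.Completion.create(**params)`.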

We encapsulate the “openai.Completion.create” function in a function called “send_openai_request.” This function only takes three parameters: “engine,” “prompt,” and “max_tokens.” This way, we reduce the code needed to test different models and prompts.

    # Define the request
    def send_openai_request(engine, prompt, max_tokens=1024):
        response = openai.Completion.create(
            engine=engine,
            prompt=prompt,
            max_tokens=max_tokens,
            n=1,
            stop=None,
            temperature=0.7
        )
        return response

Step #3 Send the Request

Next, we will send the request. We define the model to which we want to send the request and create an example prompt. Then we send the request to the API by calling the “send_openai_request” function created in the previous step.

When the API has processed our request, it will return a JSON response that includes the output generated by the model. We will need to process this response to extract the information we need.
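To illustrate the processing step without calling the API, the sketch below works on a hand-written dictionary that mimics the shape of a completion response. The sample values are made up for illustration, and the helper names `extract_completions` and `was_truncated` are hypothetical.

```python
def extract_completions(response):
    """Return the generated texts from a completion-style response."""
    return [choice["text"].strip() for choice in response["choices"]]


def was_truncated(response):
    """True if any completion stopped because it hit the max_tokens limit."""
    return any(
        choice.get("finish_reason") == "length" for choice in response["choices"]
    )


# Hand-written stand-in for an API response (illustrative values, not real output)
sample_response = {
    "model": "text-davinci-003",
    "choices": [
        {"text": "\n\nRoses are red,", "index": 0, "finish_reason": "length"},
    ],
    "usage": {"prompt_tokens": 6, "completion_tokens": 5, "total_tokens": 11},
}

print(extract_completions(sample_response))
print(was_truncated(sample_response))
print(sample_response["usage"]["total_tokens"])
```

Checking the finish reason is useful because a completion that ran into the token limit may end mid-sentence, and the usage block helps with the cost tracking discussed earlier.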

Let’s make two requests using the same prompt, first with text-davinci-002, the predecessor model, and then with text-davinci-003 (GPT3.5, which corresponds to ChatGPT).

    # Define the prompt
    prompt = "What can you do with OpenAI?"
    model = "text-davinci-002"

    # Generate a response
    response = send_openai_request(model, prompt)

    # Print the response
    print(response["choices"][0]["text"])

OpenAI is a research lab focused on building advanced artificial intelligence.

    # Define the prompt
    prompt = "What can you do with OpenAI?"
    model = "text-davinci-003"

    # Generate a response
    response = send_openai_request(model, prompt)

    # Print the response
    print(response["choices"][0]["text"])

OpenAI is a research and development organization dedicated to advancing artificial intelligence (AI) technologies. With OpenAI, you can use AI to develop a wide range of applications, including natural language processing (NLP), computer vision, robotics, reinforcement learning, and more. OpenAI also provides tools and resources for developers to create and train AI models, such as OpenAI Gym, a toolkit for reinforcement learning and OpenAI Five, a platform for training AI in StarCraft II. Additionally, OpenAI offers education and research opportunities for individuals interested in the field of AI.

As you see, the response from “text-davinci-003” is much more comprehensive than the response from “text-davinci-002.”
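If you want to compare more than two models, the repeated code can be folded into a small loop. The sketch below takes the request function as an argument so that it runs offline; `compare_models` and `fake_send_request` are hypothetical helpers, and the fake function only stands in for “send_openai_request” from Step #2.

```python
def compare_models(prompt, models, send_request):
    """Send the same prompt to each model and collect the first completion per model."""
    results = {}
    for model in models:
        response = send_request(model, prompt)
        results[model] = response["choices"][0]["text"].strip()
    return results


def fake_send_request(engine, prompt, max_tokens=1024):
    """Offline stand-in for send_openai_request so the sketch runs without an API key."""
    return {"choices": [{"text": f" [{engine}] answer to: {prompt}"}]}


answers = compare_models(
    "What can you do with OpenAI?",
    ["text-davinci-002", "text-davinci-003"],
    fake_send_request,
)
for model, text in answers.items():
    print(f"{model}: {len(text)} characters")
```

To run the comparison against the real API, pass “send_openai_request” instead of the fake function.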

Summary

This article has provided an overview of how to use OpenAI’s language models via the API in Python. We have discussed the process of obtaining an API key, making requests to the API, and working with the returned data. We have also shown how to use the Davinci 003 model (which corresponds to ChatGPT) to generate text and answer prompts.

The possibilities of OpenAI’s language models are endless. Whether you’re a developer, researcher, or data scientist, the API provides a powerful tool for natural language processing tasks. Thanks to GPT-3.5 (ChatGPT) and other models, it has become easy to generate human-like text, answer questions, and perform other language-related tasks, with just a few lines of code. I am convinced that we will see a wave of exciting applications in the coming years – many use cases are yet to be invented.

I hope this article has provided a useful introduction to working with OpenAI’s language models in Python and that you will continue to explore the full range of capabilities offered by the API.

If you have any questions, let me know in the comments. And if you want to learn how to automate AI-art generation with DALL-E and ChatGPT, check out this article.
